Get Started with Bitnami Charts using Minikube

NOTE: This guide focuses on Minikube, but we also have similar guides for Google Kubernetes Engine (GKE) and Amazon Elastic Kubernetes Service (EKS).

Introduction

In recent years, containers have become the definitive way to develop applications because they provide packages that contain everything you need to run your applications: code, runtime, databases, system libraries, etc.

Kubernetes is an open source solution for managing application containers. With Kubernetes, you can decide when your containers should run, scale your application deployments up or down, and check the resource consumption of your application deployments. The Kubernetes project is based on Google’s experience working with containers, and it is gaining momentum as the easiest and the most recommended way to manage containers in production.

Applications can be installed in Kubernetes using Helm charts. Helm charts are packages that contain all the information that Kubernetes needs to know for managing a specific application within the cluster.

This guide will walk you, step by step, through the process of deploying applications within a Kubernetes cluster using Bitnami Helm charts.

Introducing Kubernetes

Kubernetes is an open source project designed specifically for container orchestration. Kubernetes offers a number of key features, including multiple storage APIs, container health checks, manual or automatic scaling, rolling upgrades and service discovery. Applications can be installed to a Kubernetes cluster via Helm charts, which provide streamlined package management functions.

If you’re new to this container-centric infrastructure, the easiest way to get started with Kubernetes is with Bitnami Helm charts. Bitnami offers a number of stable, production-ready Helm charts to deploy popular software applications in a Kubernetes cluster.

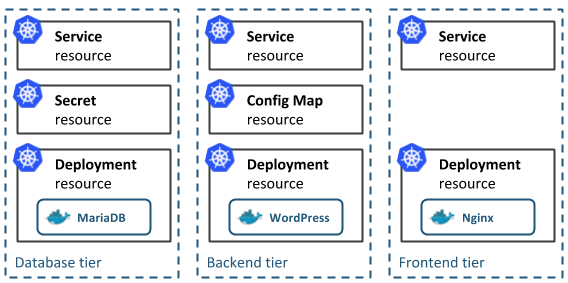

Kubernetes Native App architecture

The architecture of a typical cloud-native application consists of three tiers: a persistence or database tier, a backend tier and a frontend tier. In Kubernetes, you define and create multiple resources for each of these tiers, as you can see in the following diagram:

Working with Kubernetes

Kubernetes is platform-agnostic and integrates with a number of different cloud providers, allowing you to pick the platform that best suits your needs. Check the official Kubernetes documentation to find the right solution for you.

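There are two different interfaces from which you can manage the resources on your cluster: the Kubernetes command-line tool (kubectl) and the Kubernetes Dashboard web interface. Both are covered later in this guide.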

Introducing Helm

The official Helm webpage defines Helm as a “package manager for Kubernetes”, but it is more than this. Helm is a tool for managing applications that run in the Kubernetes cluster manager. Helm provides a set of operations that are useful for managing applications, such as inspect, install, upgrade and delete.

Helm Charts: applications ready-to-deploy

Helm charts are packages of pre-configured Kubernetes resources. A Helm chart describes how to manage a specific application on Kubernetes. It consists of metadata that describes the application, plus the infrastructure needed to operate it in terms of the standard Kubernetes primitives. Each chart references one or more (typically Docker-compatible) container images that contain the application code to be run.

A Helm chart contains at least these two elements:

- A description of the package (Chart.yaml).

- One or more templates, which contain Kubernetes manifest files.
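To make these two elements concrete, the following sketch builds a minimal chart skeleton by hand in a temporary directory. The chart name demo-chart, its version numbers and the ConfigMap template are hypothetical and serve only as an illustration; real Bitnami charts contain many more files.

```shell
#!/bin/sh
# Build a minimal, hypothetical chart skeleton in a scratch directory.
chart_dir="$(mktemp -d)/demo-chart"
mkdir -p "$chart_dir/templates"

# Chart.yaml: the package description.
# Helm v3 requires the apiVersion, name and version fields.
cat > "$chart_dir/Chart.yaml" <<'EOF'
apiVersion: v2
name: demo-chart
description: A hypothetical chart used only to illustrate the layout
version: 0.1.0
appVersion: "1.0.0"
EOF

# templates/: Kubernetes manifests containing Helm template placeholders.
cat > "$chart_dir/templates/configmap.yaml" <<'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: {{ .Release.Name }}-config
data:
  greeting: "hello"
EOF

# Show the resulting layout.
find "$chart_dir" -type f | sort
```

When a chart is installed, Helm replaces placeholders such as {{ .Release.Name }} with release-specific values before sending the manifests to Kubernetes.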

Although you can run application containers using the Kubernetes command line (kubectl), the easiest way to run workloads in Kubernetes is to use ready-made Helm charts. Helm charts simply indicate to Kubernetes how to perform the application deployment and how to manage the container clusters.

Bitnami provides a set of stable and tested charts that give you the opportunity to deploy popular software applications with ease and confidence.

Overview

Minikube is the official way to run Kubernetes locally. It is a tool that runs a single-node Kubernetes cluster inside a Virtual Machine (VM) on your computer. It is an easy way to try out Kubernetes and is also useful for testing and development scenarios.

TIP: For more information on how to run a Kubernetes cluster on other platforms, please refer to our other guides.

In this tutorial, you will learn how to install the needed requirements to run Bitnami applications on Kubernetes using Minikube.

Here are the steps you’ll follow in this tutorial:

- Configure the platform

- Create a Kubernetes cluster

- Install the kubectl command-line tool

- Install Helm

- Install an application using a Helm chart

- Access the Kubernetes Dashboard

- Uninstall an application using Helm

The next sections will walk you through these steps in detail.

Assumptions and prerequisites

This guide focuses on deploying Bitnami applications in a Kubernetes cluster running on Minikube. The example applications are Redis, MongoDB, Odoo and WordPress.

This guide assumes that you have VirtualBox or similar virtualization software installed on your computer.

Step 1: Configure the platform

If you have chosen to work locally, the first step for working with Kubernetes clusters is to install Minikube.

Install Minikube on your local system, using either virtualization software such as VirtualBox or a local terminal.

- Browse to the Minikube latest releases page.

- Select the distribution you wish to download depending on your operating system.

NOTE: This tutorial assumes that you are using Mac OS X or Linux. The Minikube installer for Windows is under development. To get an experimental release of Minikube for Windows, check the Minikube releases page.

- Open a new console window on the local system or open your VirtualBox.

- To obtain the latest Minikube release, execute the following command. Replace the OS_DISTRIBUTION placeholder with the software distribution for your platform, which is “darwin” for OS X, “linux” for Linux and “windows” for Windows.

$ curl -Lo minikube https://storage.googleapis.com/minikube/releases/latest/minikube-OS_DISTRIBUTION-amd64 && chmod +x minikube && sudo mv minikube /usr/local/bin/
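As a minimal sketch, the OS_DISTRIBUTION value can be derived from the output of uname -s instead of being typed by hand. The function name os_distribution is hypothetical and not part of Minikube, and the amd64 suffix is kept from the command above, so it is assumed that your machine uses that architecture.

```shell
#!/bin/sh
# Map the kernel name reported by `uname -s` to the OS_DISTRIBUTION placeholder.
# os_distribution is a hypothetical helper, not a Minikube command.
# An explicit kernel name can be passed as the first argument.
os_distribution() {
  case "${1:-$(uname -s)}" in
    Darwin) echo "darwin" ;;
    Linux)  echo "linux" ;;
    MINGW*|MSYS*|CYGWIN*) echo "windows" ;;
    *) echo "unsupported"; return 1 ;;
  esac
}

# Print the download URL for the current machine without downloading anything.
echo "https://storage.googleapis.com/minikube/releases/latest/minikube-$(os_distribution)-amd64"
```

The printed URL can then be passed to the curl command shown above.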

Step 2: Create a Kubernetes cluster

Starting Minikube creates a single-node cluster. Run the following command in your terminal to complete the creation of the cluster:

$ minikube start

To run your commands against Kubernetes clusters, the kubectl CLI is needed. Check step 3 to complete the installation of kubectl.

Step 3: Install the kubectl command-line tool

In order to start working on a Kubernetes cluster, it is necessary to install the Kubernetes command line (kubectl). Follow these steps to install the kubectl CLI:

- Execute the following commands to install the kubectl CLI. OS_DISTRIBUTION is a placeholder for the binary distribution of kubectl; remember to replace it with the corresponding distribution for your operating system (OS).

$ curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/OS_DISTRIBUTION/amd64/kubectl

$ chmod +x ./kubectl

$ sudo mv ./kubectl /usr/local/bin/kubectl

TIP: Once the kubectl CLI is installed, you can obtain information about the current version with the kubectl version command.

NOTE: On Debian-based systems where the Kubernetes package repository is configured, you can also install kubectl by using the sudo apt-get install kubectl command.

-

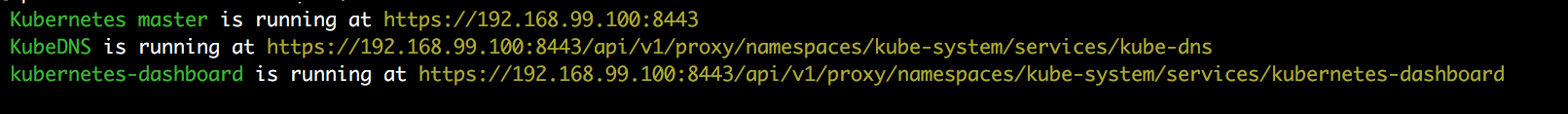

Check that kubectl is correctly installed and configured by running the kubectl cluster-info command:

$ kubectl cluster-infoNOTE: The kubectl cluster-info command shows the IP addresses of the Kubernetes node master and its services.

-

You can also verify the cluster by checking the nodes. Use the following command to list the connected nodes:

$ kubectl get nodes -

To get complete information on each node, run the following:

$ kubectl describe node
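If you prefer to script the node check rather than read the node list by eye, kubectl wait can block until every node reports the Ready condition. The sketch below wraps it in a hypothetical helper named wait_for_nodes; the default timeout of 120 seconds is an arbitrary assumption.

```shell
#!/bin/sh
# Block until all nodes report the Ready condition, or fail after a timeout.
# wait_for_nodes is a hypothetical helper; the 120s default is an assumption.
wait_for_nodes() {
  timeout="${1:-120s}"
  kubectl wait --for=condition=Ready nodes --all --timeout="$timeout"
}
```

For example, wait_for_nodes 60s returns successfully once the single Minikube node is ready, and returns a non-zero exit code if that does not happen within 60 seconds.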

Learn more about the kubectl CLI.

Step 4: Install and configure Helm

The easiest way to run and manage applications in a Kubernetes cluster is to use Helm. Helm allows you to perform key operations for managing applications, such as install, upgrade or delete.

- To install Helm v3.x, run the following commands:

$ curl https://raw.githubusercontent.com/kubernetes/helm/master/scripts/get-helm-3 > get_helm.sh

$ chmod 700 get_helm.sh

$ ./get_helm.sh

TIP: If you are using OS X you can install it with the brew install command: brew install helm.

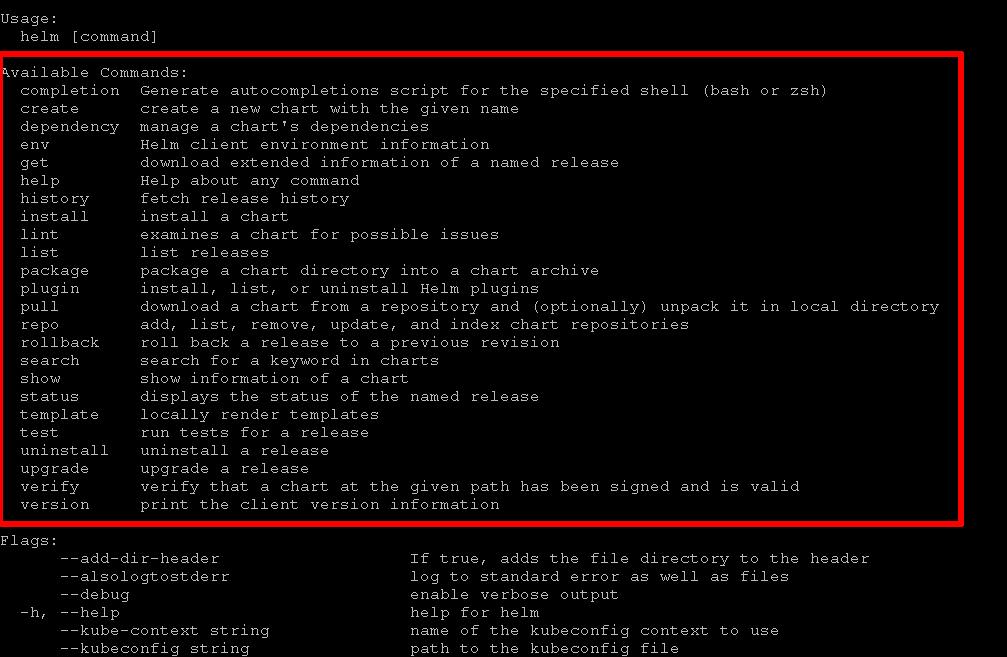

Once you have installed Helm, a set of useful commands to perform common actions is shown below:
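The sketch below walks through common Helm v3 commands, using the Bitnami Redis chart referenced later in this guide as the example. The run helper is hypothetical: by default it only prints each command, and it executes them only when the DRY_RUN variable is set to 0. The release name redis is also just an example.

```shell
#!/bin/sh
# Hypothetical dry-run helper: prints each command and only executes it
# when DRY_RUN is set to 0, so nothing is changed by accident.
run() {
  echo "+ $*"
  [ "${DRY_RUN:-1}" = "1" ] || "$@"
}

# Chart reference taken from the installation step of this guide.
CHART="oci://registry-1.docker.io/bitnamicharts/redis"

run helm show values "$CHART"    # inspect the chart's configurable parameters
run helm install redis "$CHART"  # install the chart as a release named "redis"
run helm list                    # list the releases in the current namespace
run helm status redis            # show the state and notes of a release
run helm upgrade redis "$CHART"  # upgrade the release
run helm uninstall redis         # remove the release
```

Running the script as written only prints the commands. Setting DRY_RUN=0 executes them in order, which installs and then removes the example release.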

Step 5: Install an application using a Helm Chart

A Helm chart describes a specific version of an application. Each installation of a chart in a cluster is known as a “release”. The chart includes files with the resources that Kubernetes needs and files that describe the installation, configuration, usage and license of the chart.

Executing the helm install command deploys the application on the Kubernetes cluster. You can install more than one chart across the cluster or clusters.

You can find an example of the installation of Redis using Helm charts below:

$ helm install redis oci://registry-1.docker.io/bitnamicharts/redis

NOTE: Check the configurable parameters of the Redis chart and their default values at the GitHub repository.

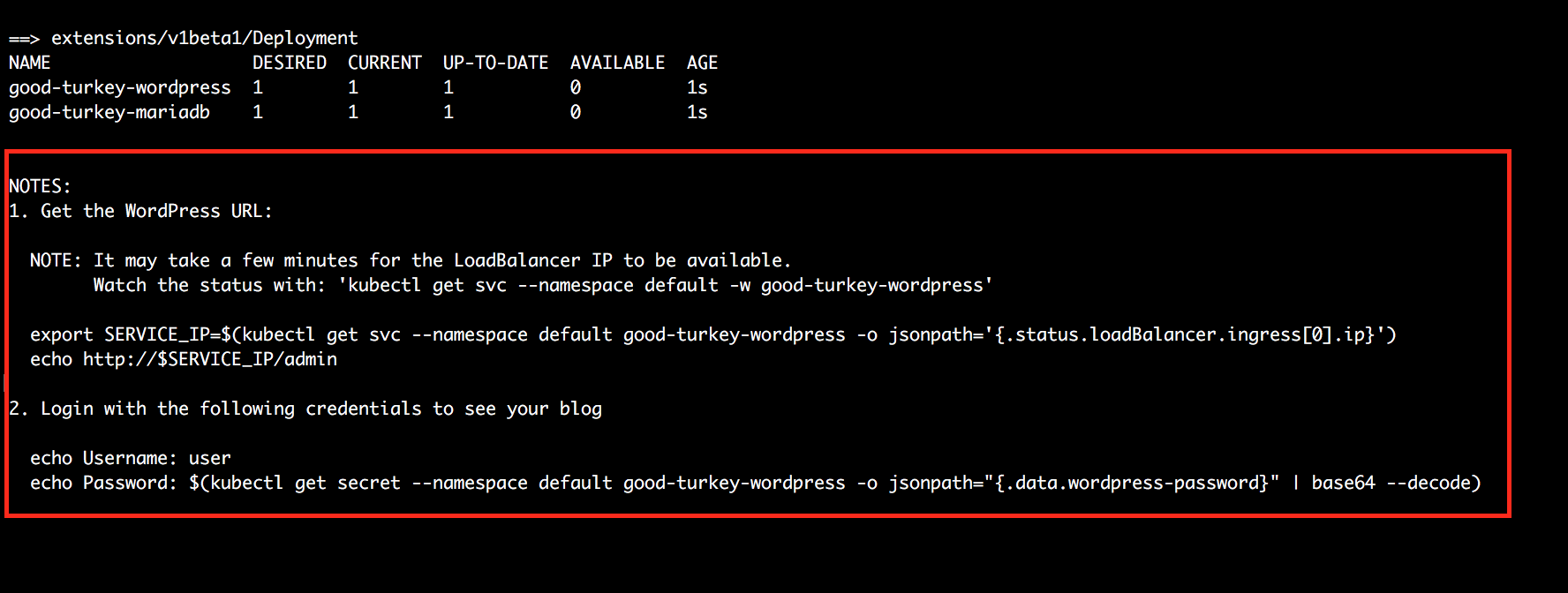

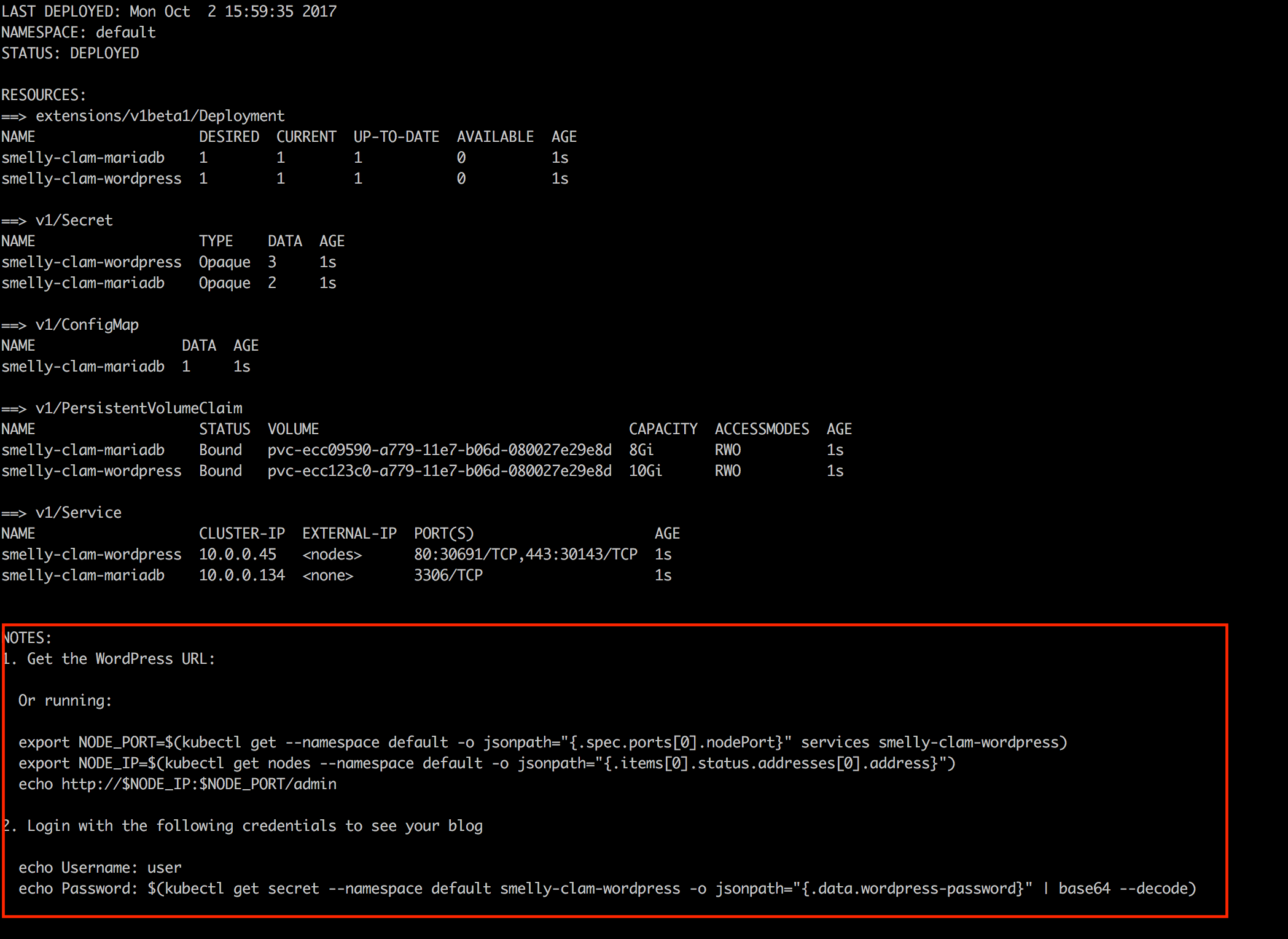

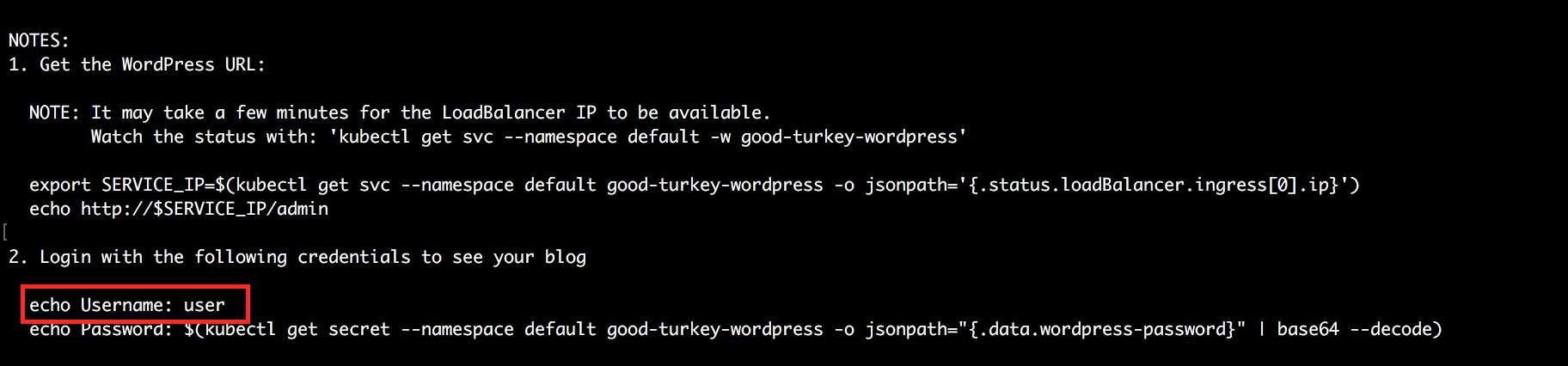

Once you have the chart installed, a “Notes” section will be shown at the bottom of the installation information. It contains important instructions about how to obtain your application’s IP address or credentials. Please check it carefully.

TIP: Unlike cloud platforms, Minikube doesn’t support a load balancer, so if you’re deploying the application on Minikube, use the command below instead:

$ helm install redis oci://registry-1.docker.io/bitnamicharts/redis --set serviceType=NodePort

You should see output similar to that shown below as the chart is installed on Minikube.

IMPORTANT: When deploying on Minikube, you may see errors such as CrashLoopBackOff and your application may fail to start. This is typically an indication that the cluster does not have sufficient memory available to it. To resolve this error, make more resources available to the cluster (we recommend a minimum of 3 GB RAM) and try again.
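One way to give the cluster more memory is to recreate it with the --memory flag of minikube start. The sketch below wraps this in a hypothetical helper named recreate_cluster. Note that minikube delete removes the existing cluster and everything deployed in it, so this is only a reasonable choice for a disposable test cluster.

```shell
#!/bin/sh
# Recreate the Minikube cluster with a given amount of memory in MB.
# recreate_cluster is a hypothetical helper; 3072 MB matches the 3 GB
# minimum recommended above.
# WARNING: `minikube delete` destroys the current cluster and its deployments.
recreate_cluster() {
  memory_mb="${1:-3072}"
  minikube delete &&
  minikube start --memory="$memory_mb"
}
```

Calling recreate_cluster without arguments uses 3072 MB, and recreate_cluster 4096 requests 4 GB instead. After the cluster starts, the charts need to be installed again.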

Find how to install MongoDB, Odoo or WordPress in the examples below:

- MongoDB: To install on Minikube, run the following command:

$ helm install mongodb oci://registry-1.docker.io/bitnamicharts/mongodb --set serviceType=NodePort

NOTE: Check the configurable parameters of the MongoDB chart and their default values at the GitHub repository.

- Odoo: To install on Minikube, run the following command:

$ helm install odoo oci://registry-1.docker.io/bitnamicharts/odoo --set serviceType=NodePort

NOTE: Check the configurable parameters of the Odoo chart and their default values at the GitHub repository.

- WordPress: To install on Minikube, run the following command:

$ helm install wordpress oci://registry-1.docker.io/bitnamicharts/wordpress --set mariadb.image=bitnami/mariadb:10.1.21-r0 --set serviceType=NodePort

NOTE: Check the configurable parameters of the WordPress chart and their default values at the GitHub repository.

Now, you can manage your deployments from the Kubernetes Dashboard. Follow the instructions below to access the Web user interface.

Step 6: Access the Kubernetes Dashboard

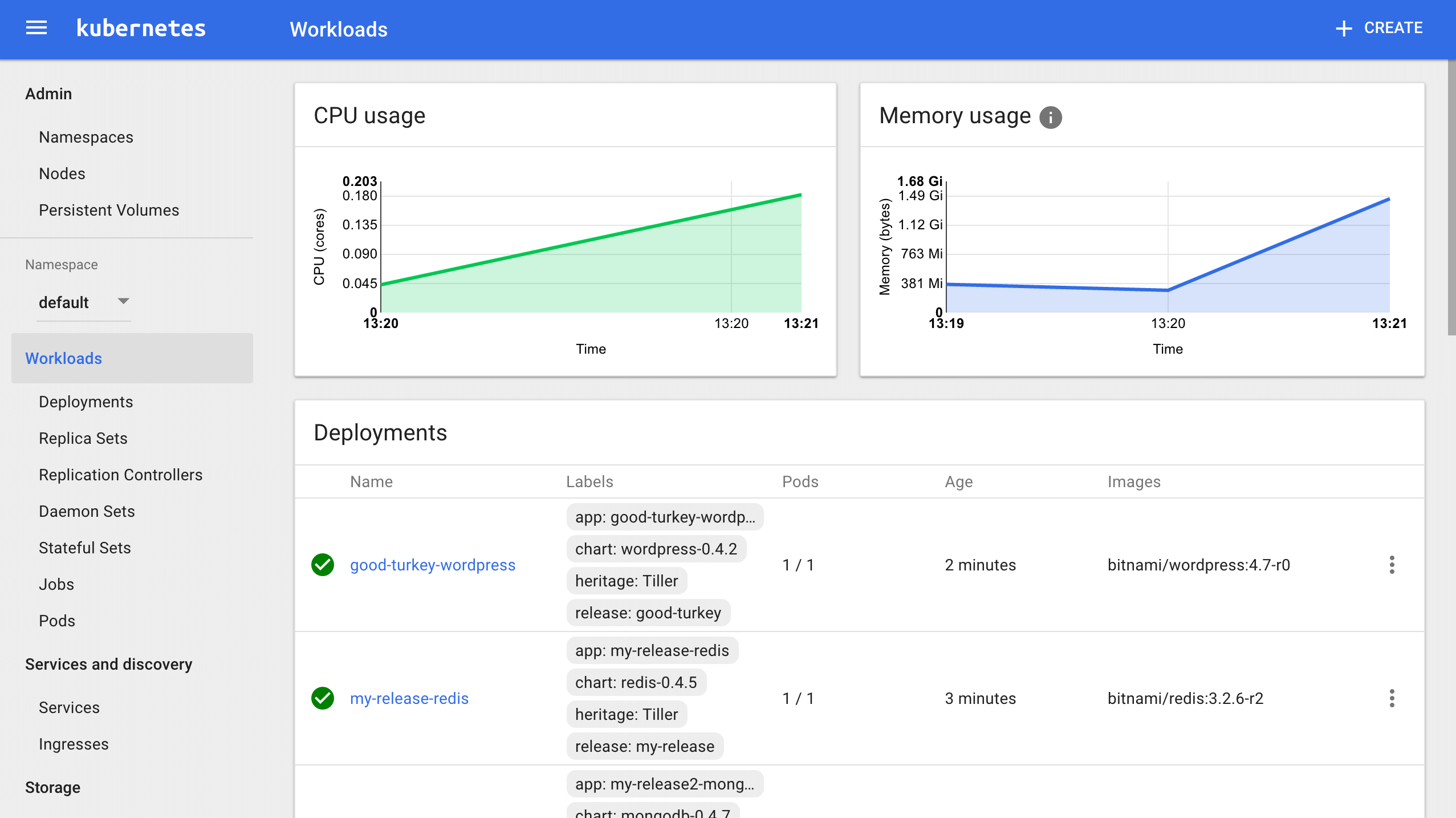

The Kubernetes Dashboard is a Web user interface from which you can manage your clusters in a simpler and more digestible way. It provides information on the cluster state, deployments and container resources. You can also check both the credentials and the error logs of each pod within the deployment.

To open the Kubernetes Dashboard, you just need to run the following command:

$ kubectl proxy

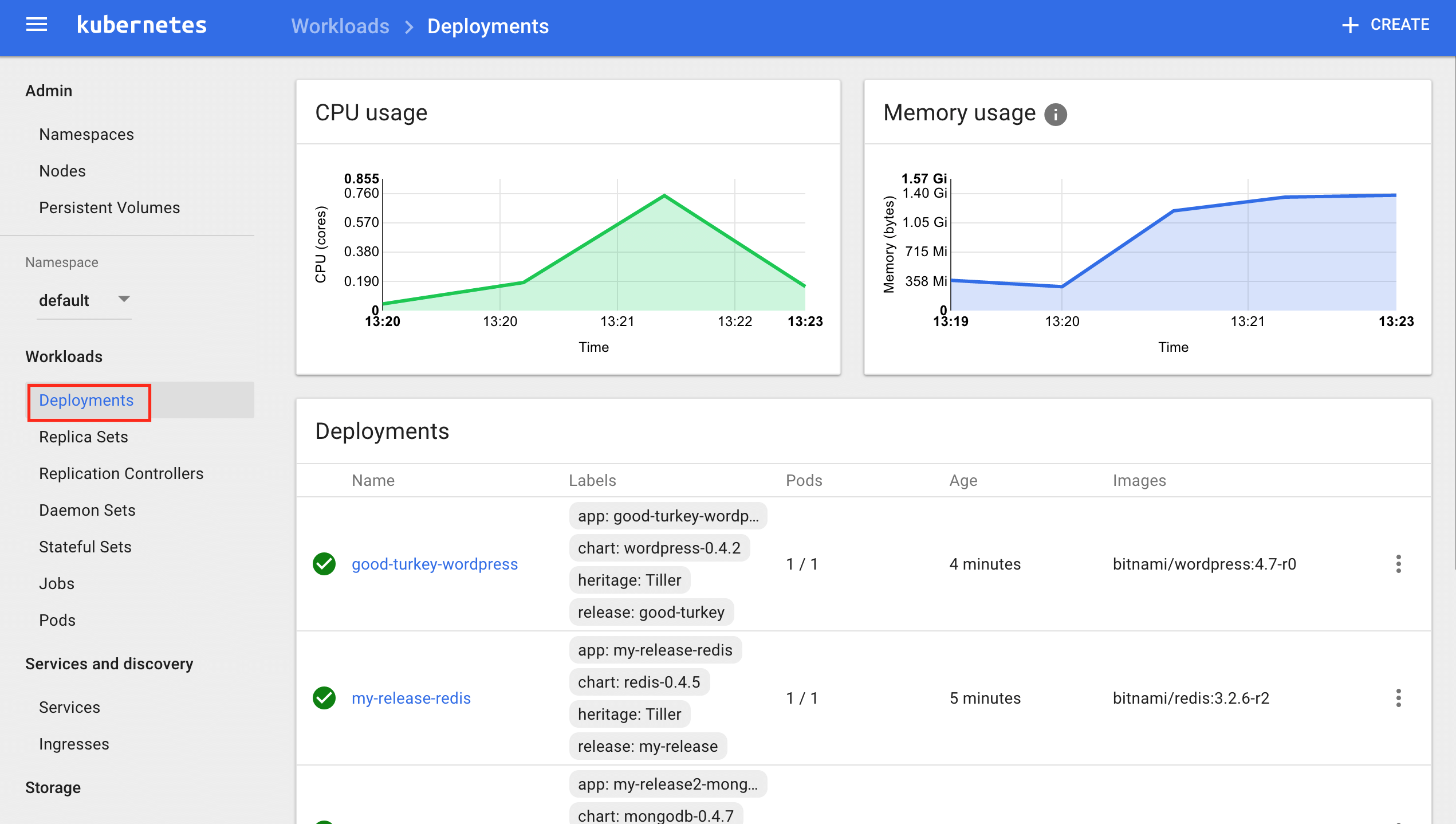

With this command, you will create a proxy server on port 8001 for accessing the Kubernetes Dashboard. It will be available at: localhost:8001/ui. The home screen shows the “Workloads” section. Here you get an overview of the following cluster elements:

- CPU usage

- Memory usage

- Deployments

- Replica Sets

- Pods

From this home screen, you can perform some basic actions such as:

- Monitoring the status of your deployments and pods.

- Checking pod and container(s) logs to identify possible errors during the creation of the containers.

- Finding application credentials.

Monitor the status of Deployments and Pods

Monitor Deployments

- To check detailed information about the status of your deployments, navigate to the “Workloads -> Deployments” section located on the left menu. It shows a screen with a graphical representation of the CPU and memory usage, as well as a list of all deployments you have in your cluster.

- Click a deployment to obtain detailed information about it:

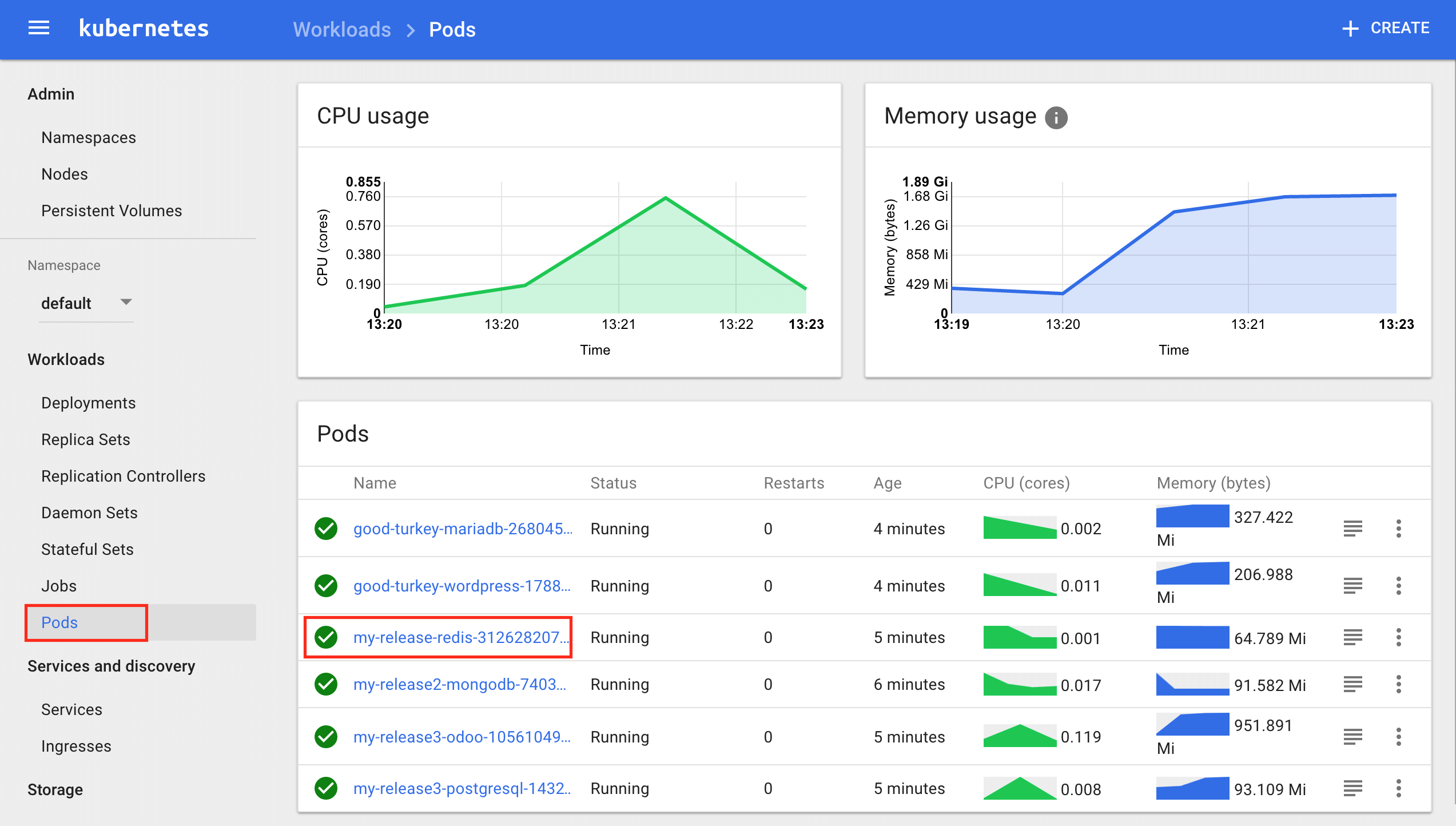

Monitor Pods

Pods are the smallest units in Kubernetes deployments. They can contain one or multiple containers (that need to share resources in order to work together). Learn more about pods.

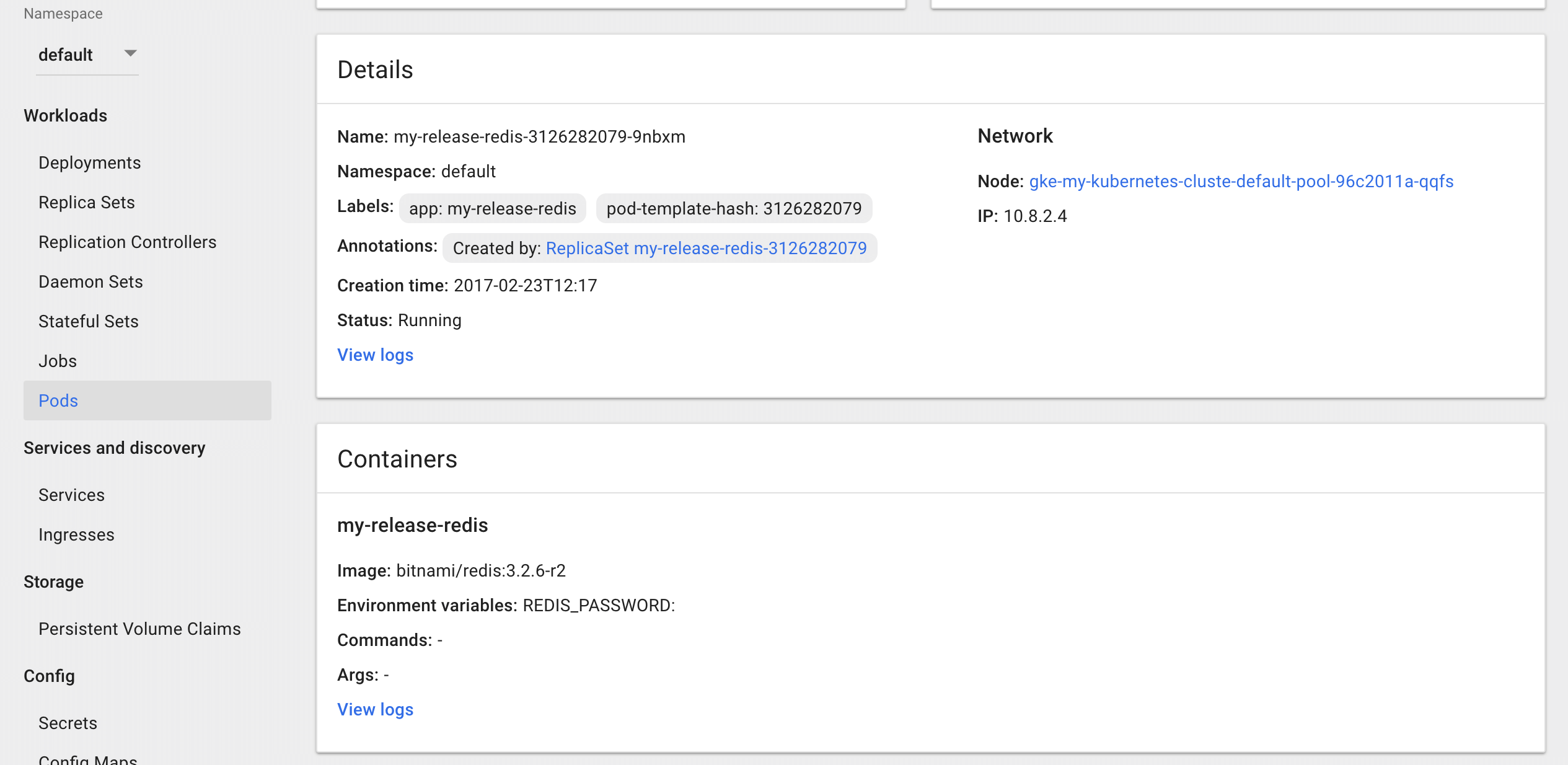

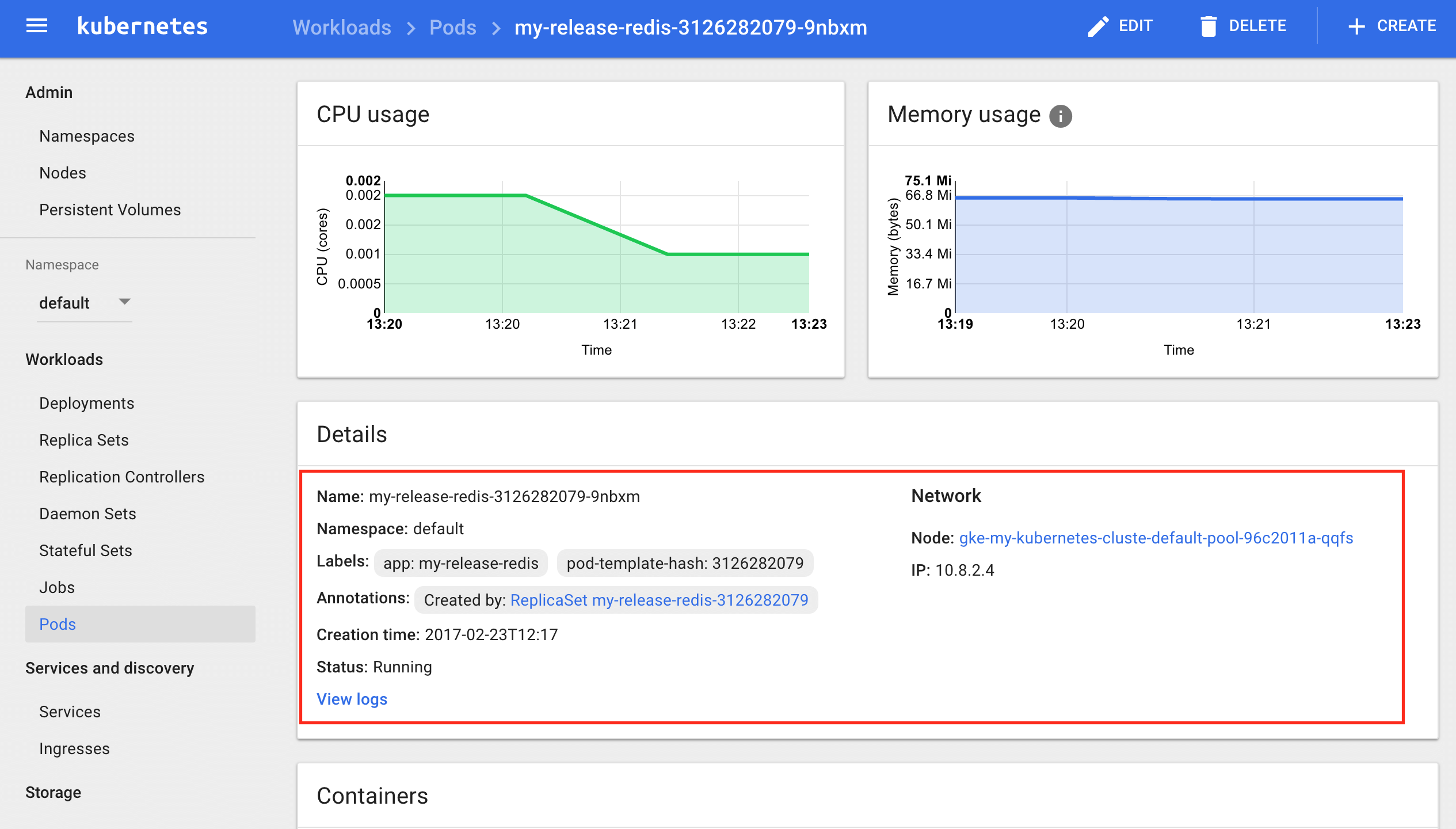

When you click “Workloads -> Pods”, you access the pod list. By selecting a pod, you will see the “Details” section that contains information related to the pod, and a “Containers” section that includes the information related to this pod’s container(s).

Follow these instructions to access pod and container information:

- To check the status of your pods in detail, navigate to the “Workloads -> Pods” section located on the left menu. It shows the pod list:

- Click the pod for which you’d like further details.

Check logs

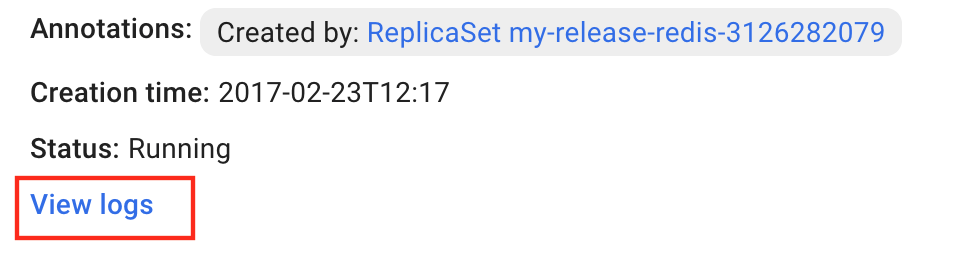

The Kubernetes Dashboard allows you to check the logs of both the pod and any containers belonging to the pod to detect possible errors that might have occurred. To access the logs viewer, follow the steps below:

-

Navigate to the “Workloads -> Pods” section located on the left menu, select the pod you’d like to check from the pod list.

-

In the detail page of the selected pod, you will find a “View logs” link both in the “Details” and “Containers” section. Click the one you want to see:

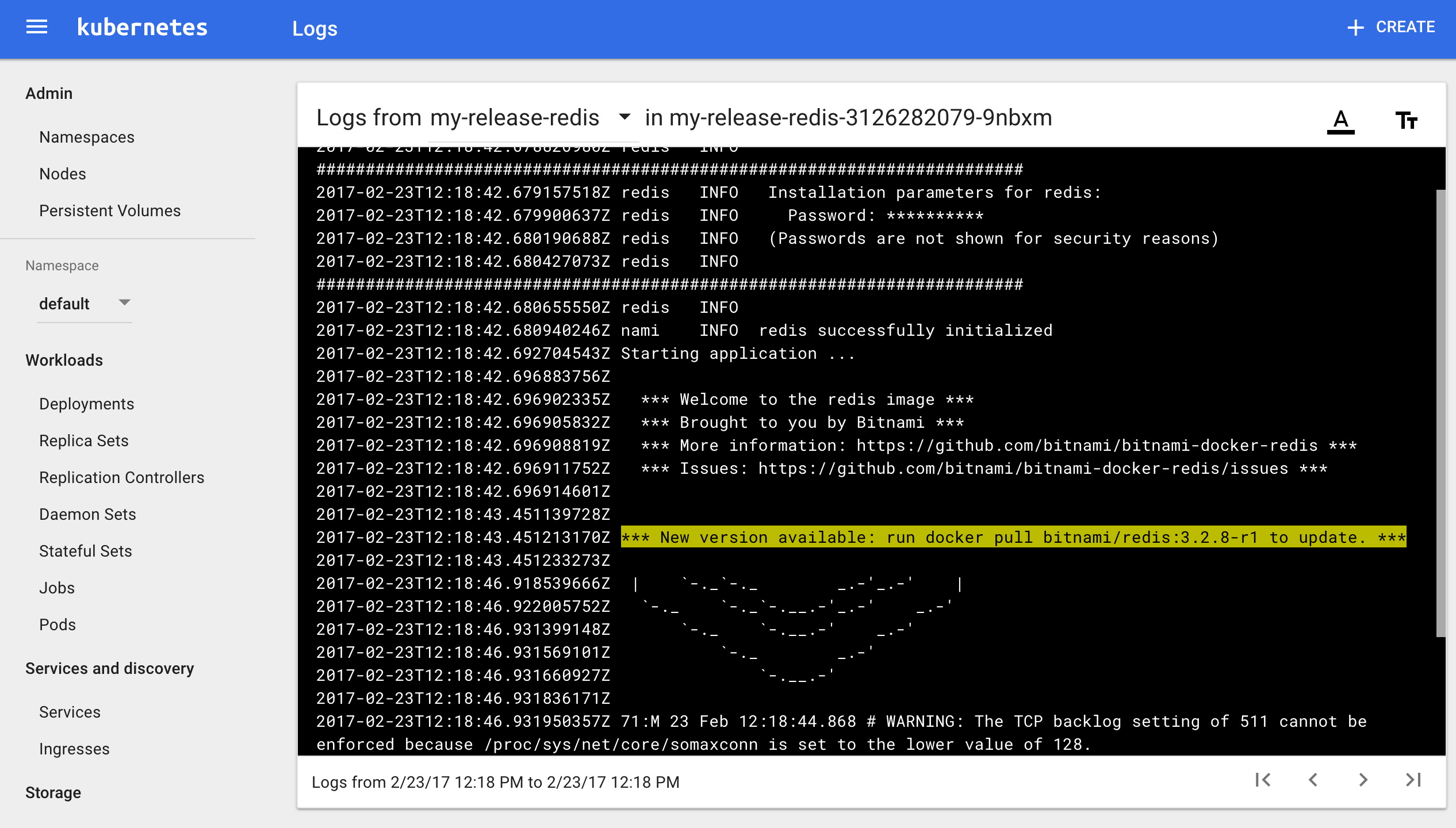

The logs viewer opens:
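The same logs are also available from the command line through kubectl logs. The sketch below defines a hypothetical helper named pod_logs that prints the most recent log lines from every container in a pod; the default of 50 lines is an arbitrary assumption.

```shell
#!/bin/sh
# Print the last lines of the logs of every container in a pod.
# pod_logs is a hypothetical helper; the 50-line default is an assumption.
pod_logs() {
  pod="$1"
  lines="${2:-50}"
  kubectl logs "$pod" --all-containers=true --tail="$lines"
}
```

To use it, obtain a pod name with kubectl get pods and then call, for example, pod_logs POD-NAME 100, replacing POD-NAME with the name of the pod.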

Find application credentials

The application credentials are shown in the “Notes” section after installing the application chart:

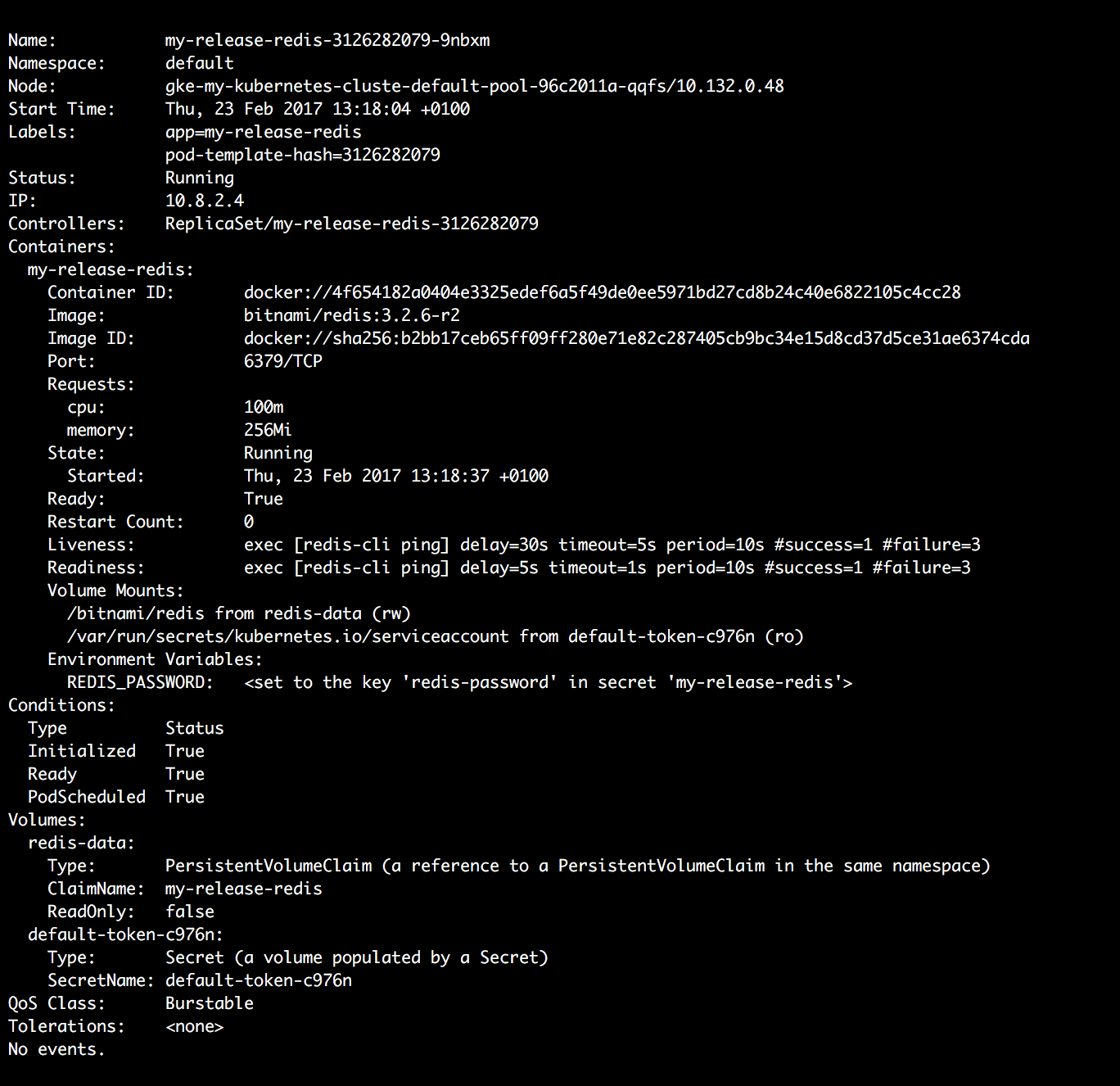

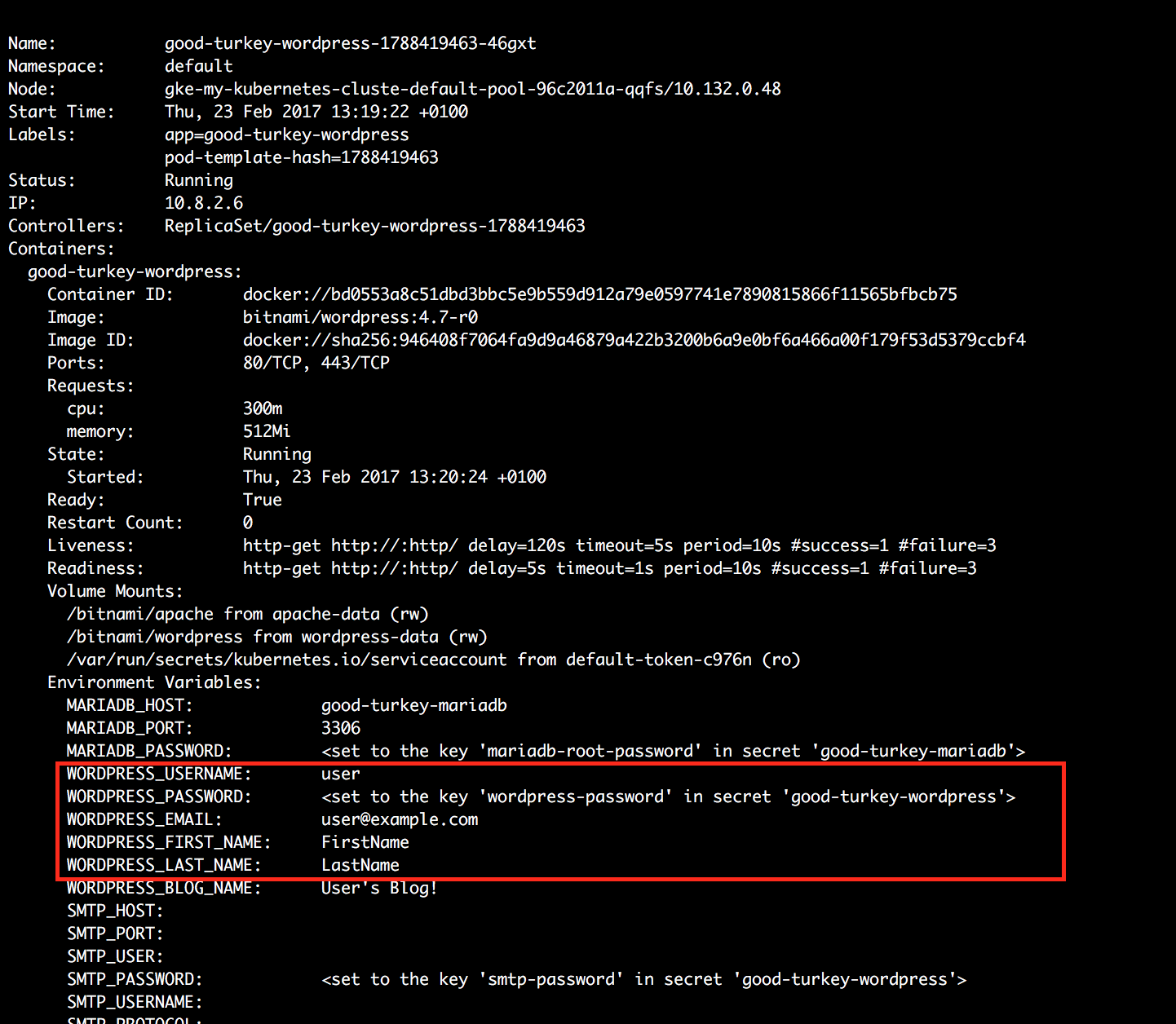

You can get the application username and password at any time by running the following command, replacing POD-NAME with the name of the pod:

$ kubectl describe pod POD-NAME

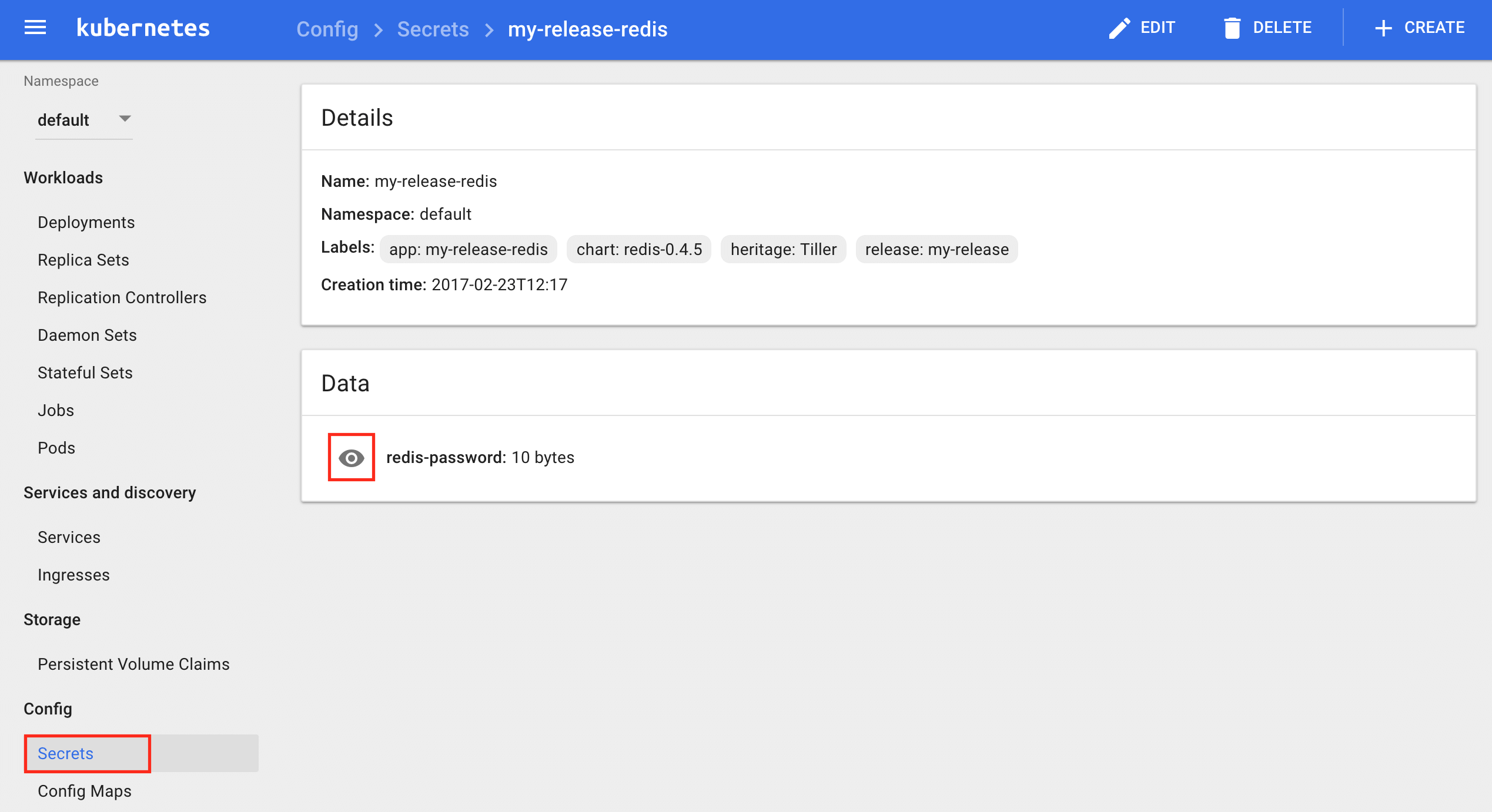

As you can see in the image above, the application password is stored as a Kubernetes secret. To get it, browse to the Kubernetes Dashboard and follow these instructions:

- Navigate to the “Config -> Secrets” section located on the left menu.

- Click the application for which you wish to obtain the credentials.

- In the “Data” section, click the eye icon to see the password:
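The dashboard steps above can also be approximated from the command line. Kubernetes stores secret values base64-encoded, so the value has to be decoded after it is read. In the sketch below, the helper names are hypothetical, and the secret name redis with the key redis-password is an assumption based on a Redis release named redis; check the “Notes” section of your installation for the actual names.

```shell
#!/bin/sh
# Decode a single base64-encoded secret value.
decode_secret_value() {
  printf '%s' "$1" | base64 --decode
}

# Read one key from a secret in the current namespace and decode it.
# get_secret_value is a hypothetical helper. Keys that contain dots
# would need escaping in the jsonpath expression.
get_secret_value() {
  secret="$1"
  key="$2"
  decode_secret_value "$(kubectl get secret "$secret" -o jsonpath="{.data.$key}")"
}
```

Under the assumption stated above, get_secret_value redis redis-password prints the Redis password.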

Step 7: Uninstall an application using Helm

To uninstall an application, run the helm delete command (in Helm v3, an alias of helm uninstall). Every Kubernetes resource that is tied to that release will be removed.

TIP: To get the release name, you can run the helm list command.

$ helm delete MY_RELEASE

NOTE: Remember that MY_RELEASE is a placeholder. Replace it with the name you used during the chart installation process.

Useful links

To learn more about the topics discussed in this guide, use the links below:

- Production-ready Kubernetes applications by Bitnami

- Intro to deploying your favourite apps on Kubernetes

- Kubernetes

- Helm

- Google Kubernetes Engine

- Minikube

- Bitnami

TIP: If you already have a Kubernetes cluster created with some running application deployments, you can take a step further and try to scale and upgrade them. For detailed instructions, refer to our tutorial on deploying, scaling and upgrading an application on Kubernetes with Helm.
